1. Use the hugemem kernel in Red Hat Enterprise Linux AS 3.0.

2. Set up the shm or ramfs file system at the size you want for Oracle's upper memory, plus about 10%.

3. Set use_indirect_data_buffers to true.

For example:

· Mount the shmfs file system as root using the command:

% mount -t shm shmfs -o nr_blocks=8388608 /dev/shm

· Set the shmmax parameter to half of the RAM size:

$ echo 3000000000 >/proc/sys/kernel/shmmax

· Set the init.ora parameter use_indirect_data_buffers=true

· Start up Oracle.
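As a rough sketch of the "half of RAM" step, the value for shmmax can be derived from the MemTotal line in /proc/meminfo instead of being typed by hand. The helper name half_ram_bytes is hypothetical (it is not an Oracle or Red Hat tool), and the script only prints a suggested value; it does not write to /proc.

```shell
#!/bin/sh
# Sketch only: half_ram_bytes is a hypothetical helper, not a system utility.

# Convert a MemTotal figure in kB (as reported by /proc/meminfo)
# into half of physical RAM, expressed in bytes.
half_ram_bytes() {
    echo $(( $1 * 1024 / 2 ))
}

# Read MemTotal when /proc/meminfo is available (Linux only).
if [ -r /proc/meminfo ]; then
    mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)
    if [ -n "$mem_kb" ]; then
        echo "Suggested shmmax: $(half_ram_bytes "$mem_kb") bytes"
        # To apply the value, write it to /proc/sys/kernel/shmmax as root.
    fi
fi
```

The printed number can then be used in place of the hard-coded 3000000000 in the echo command above.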

However, there are catches.

In recent testing, little was gained unless the memory used in the PAE extended memory configuration exceeded twice that available in the base 4 gigabytes. The test replayed a trace file through a testing tool to simulate multiple users executing statements, and it may not have been large enough to truly exercise the memory configuration. On these results, using the PAE extensions may not be advisable unless the memory is to be set at two to three times the amount available in the base configuration.

Memory Testing

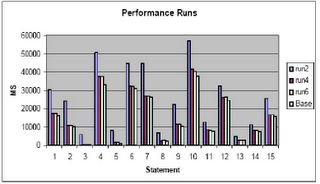

In this chart I show:

Base Run: Run with 300 megabytes in lower memory

Run2: Run with 300 megabytes in upper memory

Run4: Run with 800 megabytes in upper memory

Run6: Run with 1200 megabytes in upper memory

In my opinion, do not implement PAE unless you need more memory than the normal 4 gigabytes of lower memory can handle. Unless you can double the available lower memory, you may not see a benefit, and your performance may in fact suffer. For example, to get performance similar to a 1.7 gigabyte lower-memory db_cache_size, you must implement an upper-memory cache of nearly 3.4 gigabytes. As a comparison of the base run (300 megabytes in lower memory) to Run2 (300 megabytes in upper memory) shows, performance actually decreased by 68% overall! (Run1 was used only to establish the base load of the cache.)
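The sizing rule of thumb can be written out as simple arithmetic. This is only a sketch: the helper names are hypothetical, the factor of two comes from the test results described here, the 10% headroom comes from step 2 of the setup, and 1.7 gigabytes is approximated as 1700 megabytes.

```shell
#!/bin/sh
# Sketch of the rule of thumb; helper names are hypothetical.

# Upper-memory cache needed to roughly match a lower-memory cache:
# about double, based on the test results in this post.
upper_cache_target_mb() {
    echo $(( $1 * 2 ))
}

# Size of the shm or ramfs file system: the upper cache plus about 10%.
shm_fs_size_mb() {
    echo $(( $1 * 110 / 100 ))
}

lower_mb=1700   # assumption: 1.7 gigabytes taken as 1700 megabytes
upper_mb=$(upper_cache_target_mb "$lower_mb")
echo "lower cache:  ${lower_mb} MB"
echo "upper target: ${upper_mb} MB"
echo "shm fs size:  $(shm_fs_size_mb "$upper_mb") MB"
```

If the upper target plus headroom is more memory than the server can spare, these results suggest staying with the lower-memory cache.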

As you can see, even with 1200 megabytes in upper memory we still do not match the performance of 300 megabytes in lower memory (performance is still 7% worse than the base run). I can only conjecture at this point that the test:

1. Only utilized around 300 megabytes of cache or less

2. Should have performed more IO and had more users

3. All of the above

4. Shows you get a diminishing return as you add upper memory.

I tend to believe it is a combination of 1, 2 and 4. There may be additional parameter tuning that could be applied to achieve better results (such as setting the AWE_MEMORY_WINDOW parameter away from its default of 512M). If anyone knows of any, post them and I will see if I can get the client to retest.

1 comment:

Mike,

In this context, I would like to know about extending RAM on 32-bit Windows Advanced Server.

I have a Windows Advanced Server box with 4 GB of RAM.

Which would be better: the 4GT (/3GB) switch or the PAE switch in boot.ini?

Thanks in advance

Laks
